SimQuality is the name of a research project, but it is also much more than that. It stands for the conviction that simulation programs are valuable planning tools. As with any tool, the quality of the tool also determines the quality of the product: in our case good, functional buildings with low energy requirements and the highest possible use of renewable energy sources.

Quality assurance of simulation software

From our own many years of experience in developing simulation programs, we know that this is by no means a simple task. Complex physical models and flexible parameter selection on the part of the user require equally complex program code. Such code is only very rarely error-free right from the start.

Therefore, the computational functionality of any simulation program should be tested as comprehensively as possible. Tested and certified software offers many advantages. Among other things, it:

- increases confidence in the simulation tool and the simulation results

- creates comparability between different software products or simulation programs with similar tasks

- allows standard-equivalent planning, dimensioning and verification

The last point in particular is very important in the strongly standards-driven planning process in Germany, because developing and updating standards is a slow and laborious process. It can therefore take a very long time (more than 10 years) until the state of research and technology is reflected in the standards. In view of the pressing challenges of climate protection and the necessary rethinking in the construction industry, we should certainly not wait that long.

Vision and objective of SimQuality

We are convinced that simulation software allows us to design, construct and operate better and more energy-efficient buildings. These buildings are usually also more economical, thanks to lower investment costs and, above all, lower operating costs. If a dynamic simulation maps the processes more accurately than a static load analysis and thus saves, for example, several bored piles of a geothermal installation, investment costs are significantly reduced. Similarly, simulation-based, realistic dimensioning of pumps, pipes, storage systems, etc. usually leads to smaller components and thus to lower investment and, above all, operating costs (and, of course, lower energy consumption and CO2 emissions).

SimQuality is intended to facilitate the use of high-quality and model-standardized simulation software in the planning process and establish it on a broad scale. This is to be achieved through the following mechanisms:

- The website collects and clearly presents information about available simulation tools and their capabilities.

- Simulation tools go through test series and are evaluated according to their capabilities (and graded in a manner similar to Stiftung Warentest).

- Simulation models are documented in detail and parameterization is described.

- The test cases of the SimQuality test series document the setup and parameterization of simulation models (and program input) and thus also support the learning process for using the programs.

Those interested in simulation should find answers to the following questions on this website:

- Which tools are suitable to handle a specific task/problem?

- What are the capabilities and qualities of a particular simulation program?

To ensure that these questions can be answered sufficiently objectively, we have established a set of evaluation rules for the use of the platform.

Rules

In the SimQuality research project, we have developed a set of rules for testing simulation programs:

- Test results are stored for each combination of program/library, version, and tester/result provider.

- Only results for published software are allowed, i.e. program adaptations made solely for the purpose of validation are not permitted. The tested programs must be available on the market to planners and engineers.

- The results must be reproducible by third parties. The easiest way to achieve this is to provide the simulation input data (this earns bonus points in the evaluation). Additional documentation of the data input/modeling can also make the results easier to reproduce.

Specifying the version makes it possible to distinguish between different development stages of a software product.

Specifying the tester/test data provider makes it possible to account for the different levels of competence and detailed knowledge among simulation users. A developer of the software certainly has greater detailed knowledge and may be able to use undocumented or little-known special functions that a "normal" engineer may not know. This also has an advantage: the use of these functions is explained in the test series and thus made known, which helps all other users of the software.
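
The storage rule above can be pictured as a small data structure. The following is only a minimal sketch: the class names, field names, program name and numbers are hypothetical and chosen for illustration; they do not describe how the SimQuality platform actually stores its data.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ResultKey:
    """Identifies one submission (all field names are hypothetical)."""
    program: str   # program or library name
    version: str   # published version that was tested
    provider: str  # tester / result provider


@dataclass
class ResultStore:
    """In-memory store with one entry per (key, test case)."""
    entries: dict = field(default_factory=dict)

    def add(self, key, test_case, values, inputs_provided=False):
        # inputs_provided records whether the simulation input data were
        # supplied, which makes third-party reproduction easier.
        self.entries[(key, test_case)] = {
            "values": list(values),
            "inputs_provided": inputs_provided,
        }

    def get(self, key, test_case):
        return self.entries[(key, test_case)]


# Illustrative data only: same program and version, two different testers.
store = ResultStore()
developer = ResultKey("ExampleSim", "2.1.0", "developer")
engineer = ResultKey("ExampleSim", "2.1.0", "external engineer")
store.add(developer, "TC01", [20.0, 20.4, 21.1], inputs_provided=True)
store.add(engineer, "TC01", [20.0, 20.5, 21.3])
```

Because the tester is part of the key, results from a developer and from an external engineer for the same program version are kept side by side instead of overwriting each other.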

Testing methodologies

In addition to regression tests and module/unit tests, performing test series and comparing simulation results against reference values is important for the quality assurance of a calculation program. Unfortunately, formulating the test series and test criteria is in itself quite difficult. The topic has been taken up in numerous publications, and several test series already exist.

There are fundamentally different testing methodologies to check a model for correct functionality. Depending on the method and author, a wide variety of terms are used here, such as validation, verification, plausibility tests, benchmarking, etc. In our opinion, a clear definition is not really possible. We generally use the term validation as a synonym for functional testing.

Common test procedures are:

- Comparison between simulation results and analytical solutions,

- Comparison of simulation results with measured values, and

- Comparison of simulation results of several programs/models among each other.

Analytical solutions

For today's tasks and dynamic simulation programs, a comparison with analytical solutions is de facto not possible. Analytical solutions can only be constructed for highly simplified special cases. Because of these simplifications, however, such cases are no longer relevant in practice, and conclusions from an analytical validation generally cannot be generalized. Analytical (approximate) solutions for steady-state thermal bridge calculations are an exception.
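
To illustrate what such a highly simplified special case looks like, the sketch below compares a numerical solution with the analytical one for a single lumped thermal mass cooling towards a constant ambient temperature. The model, the explicit Euler scheme and all parameter values are assumptions made for this illustration only; they are not taken from any SimQuality test case.

```python
import math


def analytical(t, t0=20.0, t_env=0.0, tau=10.0):
    """Exact solution of dT/dt = -(T - t_env) / tau with T(0) = t0."""
    return t_env + (t0 - t_env) * math.exp(-t / tau)


def simulate_euler(t_end, dt, t0=20.0, t_env=0.0, tau=10.0):
    """Explicit Euler integration; returns a list of (time, temperature)."""
    steps = round(t_end / dt)
    temp = t0
    history = [(0.0, temp)]
    for i in range(1, steps + 1):
        temp += dt * (-(temp - t_env) / tau)
        history.append((i * dt, temp))
    return history


def max_error(dt, t_end=50.0):
    """Largest absolute deviation from the analytical solution."""
    return max(abs(temp - analytical(t)) for t, temp in simulate_euler(t_end, dt))


for dt in (1.0, 0.1):
    print(f"dt = {dt}: max. deviation = {max_error(dt):.3f} K")
```

The deviation shrinks as the time step is reduced, which is the kind of statement an analytical comparison can support. It says nothing, however, about how the same program behaves in a realistic multi-zone building model.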

Comparison with measured values

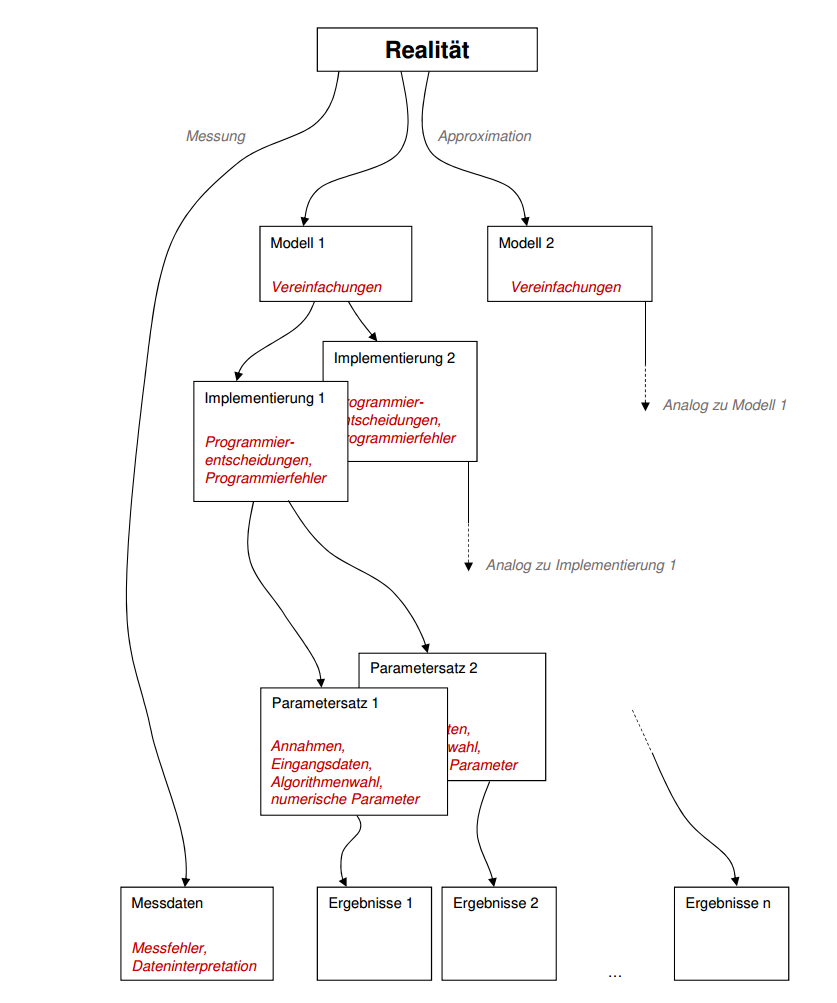

The comparison with measured values/monitoring data seems obvious at first glance. On closer inspection, however, such a validation raises numerous problems and sources of error.

As a rule, simulation programs are not used to design the monitoring or measurement data acquisition. That is, it is not checked in advance whether the measured quantities are sufficient and suitable for validating a simulation model. Instead, measured values are usually acquired first, and only then is an attempt made to match the simulation model to the data (or certain measured values are taken from third parties).

Of course, this leaves certain parameters undetermined, and these free parameters can then be used to calibrate the model. A common criticism of this approach is that the simulation results are merely "fitted" to the data. Such a match between simulation results and measured data therefore only provides evidence that the simulation model is in principle capable of reproducing the complexity of the measured reality (including potential measurement errors).

Numerous sources of error must be taken into account and minimized during the acquisition and evaluation of measured values:

- Measurement errors in measurement hardware/calibration of sensors/sensor drifts etc.,

- Placement of sensors (e.g. significantly different temperatures can be measured in the same room air volume depending on the sensor position: suspended in the center of the room, at an outside wall corner, on an inside wall surface, higher or lower in the room, near a window or exposed to solar radiation, etc.; differences of several kelvin are possible here),

- Interpretation of measured data (room air temperature vs. operative temperature; global radiation or predominantly diffuse radiation due to self-shading/foreign shading; surface temperature or temperature in/above the boundary layer; …)

The last point in particular must be considered critically when comparing measured data with simulation results. Simulation models work with simplifications and idealizations of the built environment (e.g. assumption of perfectly mixed room volumes, or neglect of thermal bridge effects and assumption of homogeneous surface temperatures, etc.). Accordingly, it must be checked whether the measurement data and the simulation results actually describe the same quantity. Especially when published measurement data are used without detailed information about data acquisition, sensor placement, etc., the result of a comparison with the simulation must be viewed critically.

Alternatively, sensors can be placed specifically with the aim of validating models, so that certain disturbance variables are eliminated from the outset. For this reason, it is always advisable to check the planned monitoring in advance with the simulation model and to use the simulation to select suitable sensors and define the measurement methodology.

Comparison of different models and implementations

Another form of validation is the comparison of different simulation models with each other. If the models to be compared cover a similar range of tasks and are meant to fulfill similar accuracy requirements, one may expect sufficiently close agreement between the model results. However, this depends on several preconditions:

- the models must be comparable in terms of model complexity and effects represented,

- the models must use the same or at least similar parameters; or there must be clearly defined and unambiguous conversion rules between model parameters,

- the result variables must be comparable (even comparing hourly mean values of temperature with instantaneous values can lead to larger deviations, depending on the application),

- the numerical algorithms must be appropriate to the mathematical formulation; excessive simplification of equations for the purpose of implementation must not lead to approximation errors (e.g. use of steady-state equations for strongly dynamic processes, averaging intervals that are too large, etc.); comparable numerical parameters must also be used if they influence the results (e.g. grid detail levels in FEM/FVM methods),

- the software implementations must not contain errors
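
The precondition on comparable result variables can be made tangible with a small numerical experiment. This is a minimal sketch that assumes a synthetic temperature curve (a daily sine wave with 5 K amplitude around 20 °C); the curve and the function names are made up for illustration and do not represent any particular program's output.

```python
import math


def temperature(t_hours):
    """Synthetic temperature in degC: daily sine wave, 5 K amplitude."""
    return 20.0 + 5.0 * math.sin(2.0 * math.pi * t_hours / 24.0)


def hourly_mean(hour, samples=60):
    """Mean over the interval [hour, hour + 1), midpoint rule."""
    step = 1.0 / samples
    total = sum(temperature(hour + (i + 0.5) * step) for i in range(samples))
    return total / samples


# Program A reports instantaneous values at the full hour,
# program B reports the mean over the following hour.
max_dev = max(abs(hourly_mean(h) - temperature(h)) for h in range(24))
print(f"largest deviation between both outputs: {max_dev:.2f} K")
```

Both output series describe exactly the same temperature curve, yet they differ by more than half a kelvin at some hours (roughly 0.65 K for this curve). A deviation of this size says nothing about model quality; it only reflects that the two result variables are defined differently.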

In reality, it is difficult to fulfill all these conditions. Accordingly, a model-based comparison between different programs for a selected realistic application scenario sometimes results in a large number of result variants, as shown below:

Homogenization of model-model comparisons

There are various ways to reduce the range of variation in a model-model comparison. Based on the requirement that the models be equivalently applicable, different scenarios can be defined in which individual model components are specifically tested. Complete tests in which many model components are active at the same time always carry the risk that errors in individual model components are masked by other physical effects, or that different errors compensate each other. It is therefore more effective to perform individual component tests in which specific model functionalities are tested and compared over a range of parameters. The parameters to be used are specified very precisely, and the physical model to be used is also described in as much detail as the required results demand.

When using such component tests, programming errors or model accuracy problems can be identified directly. Correct model results often lie within a very narrow corridor in these individual tests, which facilitates the definition of validation criteria.
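
A validation criterion based on such a narrow corridor could, for example, take the form of a maximum allowed deviation from the reference values. The sketch below assumes a simple absolute tolerance; the function name, the tolerance of 0.2 K and all numbers are hypothetical and are not the criteria used in the SimQuality test suite.

```python
def within_corridor(result, reference, abs_tol):
    """True if every value deviates from its reference by at most abs_tol."""
    if len(result) != len(reference):
        raise ValueError("result and reference must have the same length")
    return all(abs(r - ref) <= abs_tol for r, ref in zip(result, reference))


# Illustrative values only (temperatures in degC, tolerance in K).
reference = [20.0, 21.5, 23.0]
tool_a = [20.05, 21.45, 23.1]   # stays close to the reference
tool_b = [20.0, 22.4, 23.0]     # one value is 0.9 K off

print(within_corridor(tool_a, reference, abs_tol=0.2))
print(within_corridor(tool_b, reference, abs_tol=0.2))
```

With these numbers the first tool passes and the second fails because of a single outlier. In a component test such an outlier points directly at the tested model functionality, which is much harder to achieve in a whole-building comparison.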

SimQuality follows this approach of model-model validation through individual component testing in the SimQuality (2020) test suite.